Title: Finalizes: Mastering Project Completion

In a rapidly evolving digital landscape, the successful conclusion of complex undertakings is a hallmark of visionary leadership. The year 2025 marks a pivotal moment as the European Union (EU) **finalizes** its groundbreaking AI Liability Law, a world-first legislative act set to reshape the global tech industry. This achievement is more than a political milestone; it is a comprehensive effort to bring clarity and accountability to the fast-growing field of artificial intelligence. As the EU **finalizes** this framework, it addresses pressing ethical concerns, economic implications, and the future of human-AI interaction, sparking intense debate across continents. This article examines the legislation's provisions, its global impact, and the painstaking process that allowed the EU to **finalize** such a monumental project.

Understanding the EU’s AI Liability Framework as It Is Finalized

The EU’s journey to regulate artificial intelligence has been a marathon, not a sprint. The AI Liability Law, finalized in 2025, represents the culmination of years of deliberation, negotiation, and expert consultation. It reflects the EU’s commitment to creating a safe and trustworthy digital environment in which the benefits of AI are harnessed responsibly.

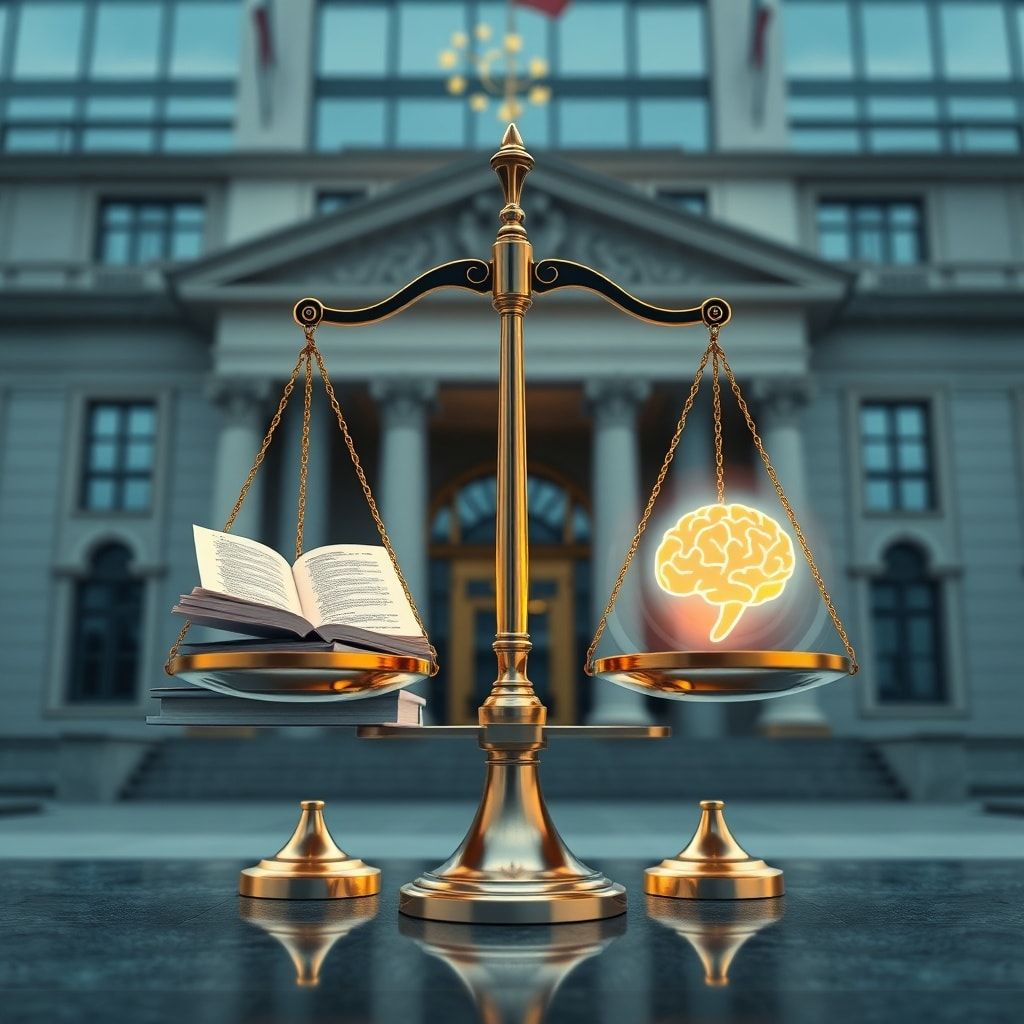

Defining AI Liability: What the EU Finalizes

At its core, the EU AI Liability Law aims to clarify who is responsible when an AI system causes harm. The question matters in an age where algorithms make decisions, operate machinery, and even influence human behavior. The law targets both high-risk AI applications, such as those used in medical devices, autonomous vehicles, or critical infrastructure, and general-purpose AI systems, which are increasingly integrated into a wide range of products and services. The text the EU **finalizes** defines “harm” broadly, covering not only physical injury or property damage but also significant non-material damage such as discrimination, privacy violations, or reputational harm. A key provision shifts the burden of proof in certain cases: rather than requiring victims to prove negligence, it requires AI providers to demonstrate that their systems were not at fault, making it easier for victims to seek redress. Refined over multiple drafts, this provision places accountability firmly on those who develop and deploy AI.

Key Provisions and Protections the EU Finalizes

The newly **finalized** law introduces several provisions designed to protect consumers and foster a more transparent AI ecosystem. It imposes strict transparency obligations on AI developers and deployers, requiring them to provide clear information about how their systems work, what they can do, and where their limits lie. Data protection is heavily emphasized, building on existing GDPR principles so that personal data used by AI systems is handled ethically and securely. The law also sets specific requirements for human oversight, ensuring that a human remains in control and can intervene when necessary, especially in high-risk scenarios. Penalties for non-compliance are substantial, intended to deter companies from flouting the rules. The iterative process to **finalize** these details drew on extensive feedback from industry, civil society, and legal experts, aiming for an approach that is balanced yet stringent. For instance, early drafts were criticized as too broad, which led to more precise definitions and to exemptions for certain low-risk applications, an example of the EU adapting its approach as it worked toward the best possible outcome.

Global Ramifications as the EU Finalizes Its Stance

The EU’s decision to **finalize** a comprehensive AI liability law is not merely a regional matter; it sends ripples across the international tech landscape. Under what is often called the “Brussels Effect,” EU regulation frequently sets de facto global standards, because companies worldwide adapt to its rules in order to operate in the lucrative European market. This law is expected to follow the same pattern, influencing regulatory discussions and legislative efforts far beyond Europe’s borders.

Setting a Global Precedent as the EU Finalizes

As the EU **finalizes** its pioneering legislation, it effectively throws down the gauntlet to other major global players. The United States, China, the United Kingdom, and Canada have been closely watching the EU’s progress, each grappling with its own approach to AI governance. While some, like the US, have historically favored a more innovation-centric, less prescriptive regulatory model, the EU’s decisive action may prompt a re-evaluation. Countries now face a choice between developing their own distinct frameworks and aligning with the EU’s standards to facilitate international trade and collaboration. The EU’s move to **finalize** this law could accelerate a global race to establish AI governance, with different regions adopting varying degrees of stringency. Some developing nations, for example, might treat the EU’s framework as a template, while others might prioritize nascent AI industries with lighter-touch regulation, producing a complex patchwork of global rules.

The Tech Industry’s Adaptation and Response as the EU Finalizes

For AI developers and companies operating globally, especially those with a footprint in the EU, the **finalized** liability law brings both significant challenges and opportunities. Compliance will require substantial investment in legal and technical teams, robust risk assessment frameworks, and ethical AI design principles. Companies will need to re-evaluate their product development cycles so that AI systems are not only effective but also transparent, explainable, and accountable from conception. For some products, this could mean higher development costs and slower market entry. However, companies that proactively embrace these rules and build trustworthy AI systems may gain a competitive advantage by earning greater consumer trust and demonstrating a commitment to ethical practices. The industry must now **finalize** its strategies for adapting to this new regulatory reality, which may spur new tools and services designed specifically to support AI compliance, as discussed in industry forums such as the AI Forum for Europe.

The Journey to Finalization: A Legislative Marathon

The path to **finalizing** the EU AI Liability Law has been a monumental undertaking, reflecting the intricate nature of regulating a rapidly advancing technology. It highlights the complexities inherent in modern global governance and the determination required to bring a comprehensive legislative project to fruition.

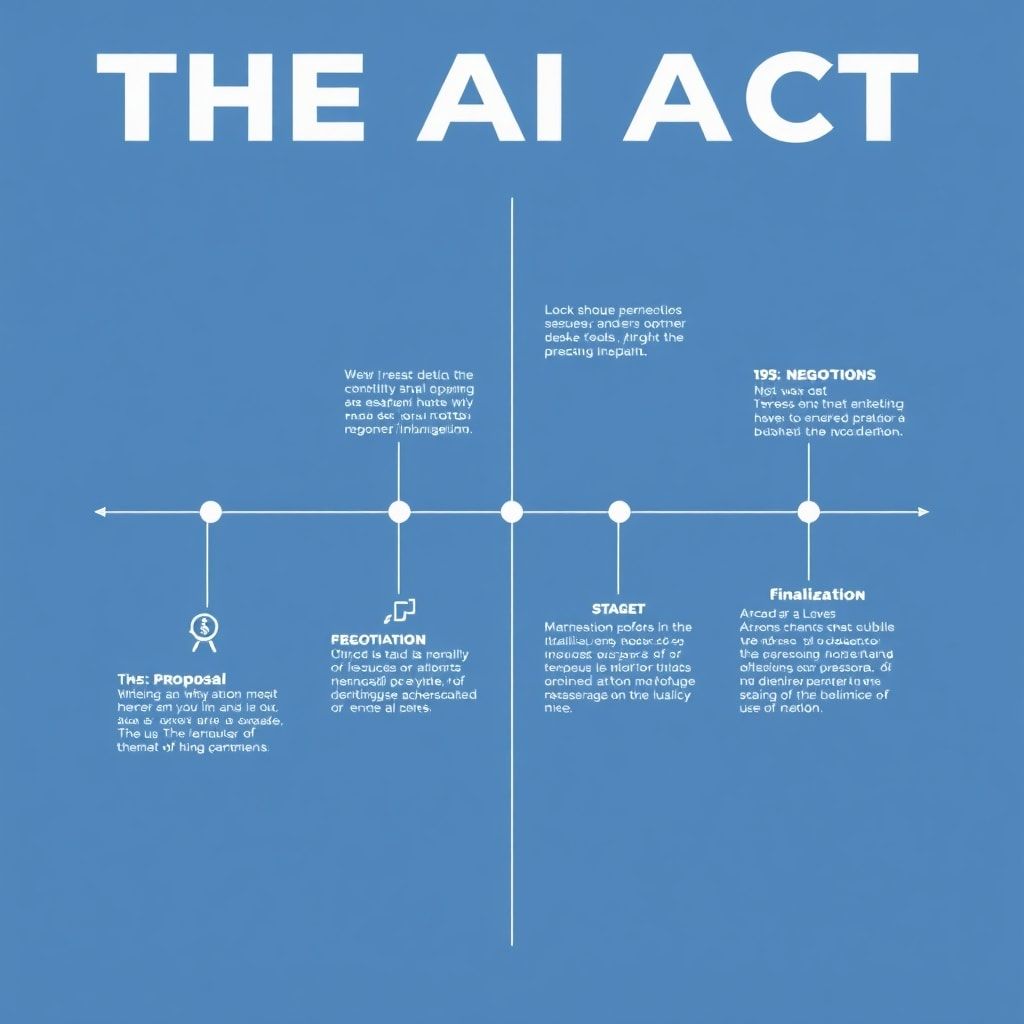

From Proposal to Promulgation: How the EU Finalizes

The legislative journey began years ago with initial proposals, driven by concerns over AI’s potential societal impact. A rigorous, multi-stage process followed: expert consultations, impact assessments, and extensive debates within the European Parliament and the Council of the European Union. The “trilogue” negotiations, informal meetings between representatives of the European Parliament, the Council, and the European Commission, were particularly critical. These sessions involved intense bargaining and compromise to reconcile differing viewpoints among member states and parliamentary groups. Each amendment and clause was meticulously debated and refined for clarity, enforceability, and fairness. The ability to **finalize** such a complex piece of legislation, navigating diverse national interests and technological nuances, underscores the EU’s unique institutional capacity for consensus-building. This legislative marathon, marked by countless hours of deliberation, produced the text that the EU now **finalizes**.

Addressing Concerns and Debates as the EU Finalizes

Throughout its development, the AI Liability Law sparked considerable debate. The central question was how to balance fostering innovation against ensuring public safety and ethical standards. Algorithmic bias, data privacy, the extent of human oversight, and the risk that regulatory burdens would stifle technological progress were all hotly contested. Civil society organizations pushed for stronger protections for individuals, while industry groups warned against overly prescriptive rules. The EU’s process of **finalizing** the law meant carefully weighing these competing interests and seeking solutions that promote responsible innovation without compromising fundamental rights. For instance, the final text provides for regulatory sandboxes in which AI systems can be tested responsibly, supporting innovation while maintaining oversight. The dialogue around these challenges continues even as the EU **finalizes** the law, a sign of how dynamic AI governance remains.

Practical Implications and Future Outlook After the EU Finalizes

With the EU having **finalized** its AI Liability Law, the focus now shifts to its practical implementation and long-term impact. This legislation is not merely a static document; it is a living framework that will evolve alongside the technology it seeks to govern, shaping the future of AI for years to come.

Impact on Businesses and Consumers After the EU Finalizes

For businesses, particularly those developing or deploying AI systems within or for the EU market, the **finalized** law necessitates a significant overhaul of operational procedures. This includes implementing robust AI governance frameworks, conducting thorough risk assessments, ensuring transparency in algorithmic decision-making, and investing in continuous monitoring and auditing of AI systems. While this may raise compliance costs initially, it is also an opportunity to build more resilient, trustworthy, and ethically sound AI products, potentially enhancing brand reputation and consumer loyalty. For consumers, the law promises stronger protection and greater transparency. They will have clearer avenues for redress if harmed by an AI system, and better access to information about how AI affects their lives. This empowerment is expected to foster greater public trust in AI technologies and, in turn, their broader adoption. The true impact will become evident once the law’s transition period ends and companies have fully integrated its requirements.

The Evolving Landscape of AI Governance After the EU Finalizes

The EU’s AI Liability Law, while comprehensive, is not the endpoint of AI governance. Artificial intelligence is a rapidly advancing field, with new applications and ethical dilemmas emerging constantly. The legislative framework is designed to be adaptable, with provisions for future amendments or complementary regulations to address unforeseen challenges. The EU will continue to play a leading role in the global dialogue on AI, potentially influencing international standards and cooperation agreements. Successful implementation of this law could pave the way for further harmonization of AI regulations across jurisdictions, fostering a more coherent global approach to AI governance. As the world continues to grapple with the implications of AI, the EU’s commitment to **finalizing** robust and responsible frameworks will remain crucial, setting a benchmark for how societies can harness technology safely and ethically. The continuous effort to refine and adapt these laws will be paramount as the technology itself develops new capabilities.

Conclusion

The EU’s **finalization** of its world-first AI Liability Law in 2025 marks a monumental achievement in global technology governance. This legislative triumph demonstrates the EU’s proactive approach to regulating emerging technologies, setting a significant precedent for how AI will be developed, deployed, and held accountable worldwide. By clarifying liability, enhancing transparency, and prioritizing consumer protection, the EU has **finalized** a framework designed to foster trust and ensure the responsible advancement of artificial intelligence. While businesses still face challenges in adapting to these new regulations, the long-term benefits of a more ethical and accountable AI ecosystem are substantial. As other nations continue their own debates, the EU’s decisive action is a powerful testament to the importance of proactive governance in an age of rapid technological change. This project, now **finalized**, is a clear example of mastering project completion on a global scale. We encourage businesses, developers, and policymakers alike to understand these new regulations thoroughly and to engage actively in the ongoing dialogue to shape a safer, more equitable AI-powered future. Stay informed, stay compliant, and help us navigate this exciting new era responsibly.